The future of training is here with extended reality.

We harness the power of human curiosity to create brain-friendly training that sticks. We make innovation easy for Federal organizations, offering extended reality (XR) solutions that meet Federal security standards and enact genuine, trackable behavioral change. Whether you’re looking to begin a digital training program or enhance your learning experience, we can help.

Virtual Reality Training

We create tailor-made experiences customized to your specific needs. Instruction and guidance can range from audio and contextual 2D text boxes to fully realized virtual trainers that walk you through a task step by step.

3D Modeling

We’re experts in 3D modeling and in bringing real-world objects into simulations to create the most realistic and authentic virtual environments possible. We use photogrammetry to reconstruct a 3D mesh and texture from photographs of real objects, then translate them into highly realistic assets for VR training.

XR training outperforms its 2D counterpart.

Numerous studies have found that XR improves the retention of material while substantially decreasing overall training time.

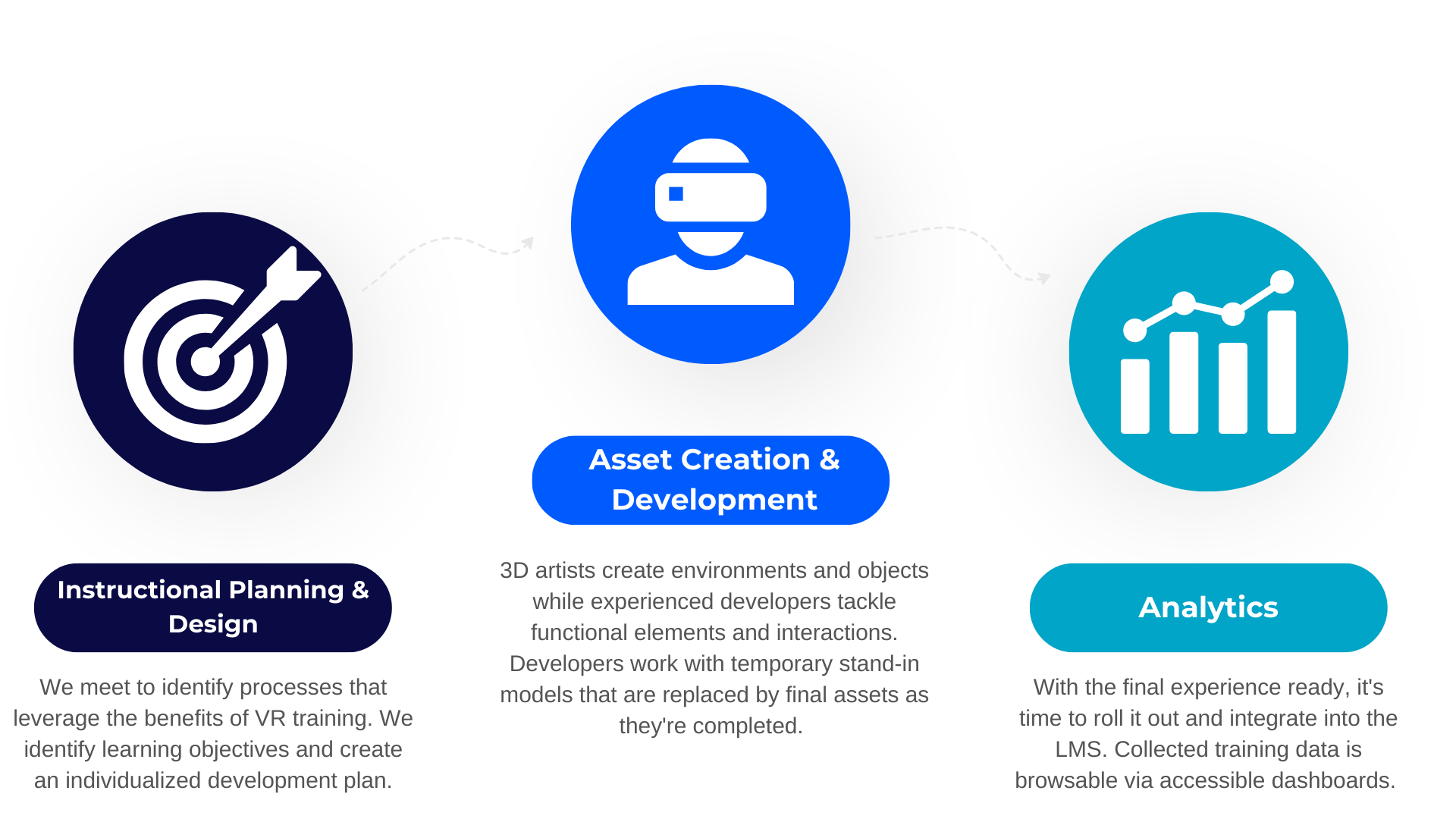

We make the training development process easy.

Our immersive experiences meet your instructional needs and provide a seamless user experience.

Our XR offerings include:

Case study: Can extended reality tools understand our emotions?

Our XR experts recently designed a voice-activated solution specifically for Federal use that allows agents to use simple, conversational language and receive dynamic responses. Similar to Amazon’s Alexa, the tool queries the web as well as internal databases and resources to provide contextually relevant answers, making agents more efficient.

This technology doesn’t just answer simple questions; it responds to human emotions and behavior for more effective results. Our tool uses AI to perform automated sentiment analysis, which considers vocal tone, intonation, and facial expressions. Designed to detect positive or negative emotions, the tool dynamically changes the speed, content, and/or approach of its response based on an agent’s perceived urgency or mood.

Meet Our Expert

Alex Fiel is Ellumen’s Creative Technologist. He specializes in all things XR, from building innovative, immersive virtual reality environments to using photogrammetry to create realistic 3D models. Alex has a passion for using emerging technology in training to reduce costs, increase retention, and enact real behavioral change.

Want to learn more? Request an XR demo from our experts.